AI avatars, eye gaze, and the return of “presence” in communication

AI has come up in many conversations lately, from conferences to policy discussions. Some of these have been about how AI could make things more accessible and improve disabled people’s lives. Alongside that, many people are working to make sure disabled people are not only part of these conversations but are also included in designing and developing the next generation of assistive technology. That matters, because it changes what gets built, and why.

Every so often, a technology story comes along that is not really about the technology. It is about a human problem we all recognise, and a fresh attempt to solve it in a way that respects the person.

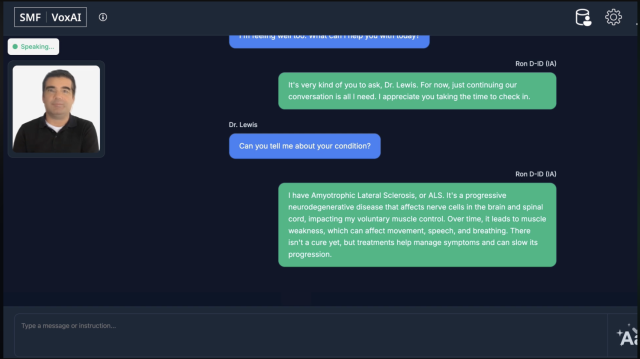

One example of this is SMF VoXAI, developed by the Scott-Morgan Foundation in collaboration with D-ID, an Israeli-founded company, and other partners including NVIDIA, Lenovo, ElevenLabs and Irisbond.

What is SMF VoXAI?

SMF VoXAI is described as a communication system for people with severe communication disabilities, including people who cannot rely on speech and may have very limited movement. It was publicly presented on 10 December 2025 at the AI Summit in New York.

One detail that stood out to me is the involvement of Bernard Muller, the Scott-Morgan Foundation’s Chief Technologist, who lives with motor neurone disease (MND). In the launch materials, he is described as having architected the system using only eye tracking (eye gaze), which is a reminder that this is not only a technology story, but also a story about who gets to shape what is built.

The Scott-Morgan Foundation website also has an interactive avatar of Bernard that you can speak to, and it responds in real time. It is a simple way of showing what the Foundation means by ‘presence’ and why this is more than just generating text on a screen. To try it yourself, scroll to the ‘This is what co-design actually looks like’ section on the website.

If you would like a bit more context, the Scott-Morgan Foundation has a short YouTube video that explains the idea behind VoXAI and shows an example of how it works.

Watch on YouTube: From Isolation to Agency (Scott-Morgan Foundation)

The system combines eye tracking with an AI-supported communication layer, an AI voice, and an expressive on-screen avatar. The aim is to reduce the delay and effort that can make communication feel slow and exhausting, and to bring back more of the flow of natural conversation.

During the December 2025 launch, it was also mentioned that a two-year research study would be conducted across six countries, looking at the impact of AI avatars on quality of life for people with communication disabilities. It will be interesting to see what comes out of that over time.

Much of the attention has focused on the idea of a “digital twin”, described as a personalised, photorealistic avatar intended to preserve appearance and identity as a condition progresses.

VoXAI comes from the Scott-Morgan Foundation, which grew out of Dr Peter Scott-Morgan’s work after his diagnosis. The Foundation has continued that work since his death in 2022. For many people living with progressive conditions, the question is not only whether they can communicate, but whether they can keep a sense of identity and social presence as things change.

What problem is it trying to solve?

For many people who use augmentative and alternative communication (AAC), communication works, but it is rarely quick. Even when someone has a reliable way to select words, the process takes time, physical effort and concentration, and that has a cost. It can be tiring. It can be frustrating. It can also change how other people respond, especially in fast-moving conversations.

Delays affect the rhythm of interaction. It can be harder to jump in at the right moment, harder to keep up with humour, and harder to say something before the topic has moved on. Over time, that can shape relationships, not because someone has nothing to say, but because the conversation does not easily make space for them.

VoXAI is being presented as an attempt to reduce that gap, by bringing back more of the sense of “presence” in communication. In other words, not only producing the right words, but doing it in a way that feels closer to real conversation.

A digital twin, and the questions it raises

The idea of a “digital twin” is part of what has drawn attention to VoXAI. Put simply, it is being described as a photorealistic avatar created from images or video captured while the person could still move or speak, with the aim of preserving appearance and identity as a condition progresses.

It is a compelling idea, but it also raises difficult questions. Who controls the images and the avatar? How is consent captured and revisited over time? What happens if the person’s preferences change? What can a family request, and what can it not? What happens after death? And, most importantly, does the person remain in control of how they are represented?

These are not reasons to dismiss the idea. They are reasons to treat it carefully. If this is going to become a real part of AAC and assistive communication, it needs to be built around the person’s control, not only around what is technically possible.

Cost and access

One practical detail mentioned in the launch announcement is pricing. The Foundation describes a freemium model, with basic access offered free, and premium features listed at $30 per month. If that remains the case, it is an interesting contrast to the cost of some high-end AAC solutions, but it also introduces the usual questions that come with subscription models: who pays long term, what happens if a subscription lapses, and how this fits with procurement and funding in real services.

Final thoughts

What I find interesting about VoXAI is not simply the use of AI, or the fact that it can generate an expressive avatar, but the shift in emphasis. It is treating conversational flow, identity, and social presence as part of the communication challenge, not as optional extras.

This is not a replacement for every AAC system, and it does not need to be. For some people, a familiar, stable AAC approach will remain the best fit. For others, especially where conditions are progressive, the promise of preserving identity and presence may be particularly meaningful.

If there is a wider lesson here, it is the same one that comes up again and again. The people who live with these realities need to be involved early, listened to properly, and part of the decisions, not just the demonstrations. That is how we end up with technology that genuinely helps, rather than technology that simply looks impressive.

Get in touch

As always, I am keen to hear how you are using AAC, mobile, and other assistive technology in your setting, and whether AI is starting to come into those conversations too. If you would like a particular topic covered in the next newsletter, please let me know. Finally, please feel free to contact me if you have a question or need technical help and support.

Article metadata

- Featured in the Karten Winter 2026 Newsletter

- This article is listed in the following subject areas: Technology, Update from Technology Advisor